Robotart

MISERABLE (arousal=LOW, valence=LOW)

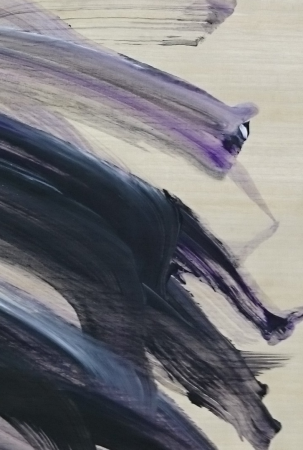

To express a miserable feeling detected in a person's brainwaves, Rob Boss painted long, low-energy purple and black lines with acrylics on a 50.5 x 76 cm piece of bamboo paper.

Category: Original artwork

Emotion recognition was conducted using an Emotiv Epoc+ headset. Electroencephalography (EEG) signals were captured from four channels over the frontal lobe and filtered into three bands: theta (4-8 Hz), alpha (8-12 Hz), and beta (12-30 Hz). Mean log-transformed band power values were extracted for each of the four channels and three bands, and the resulting twelve values were used as features by a k-nearest neighbor (kNN) algorithm to estimate levels of arousal and valence. These levels were then sent via User Datagram Protocol (UDP), as numbers in the range -1.0 to 1.0, to a desktop program that used a simplified model to associate emotions with visual design elements.

The model combined simplified rules obtained from the literature (e.g., from Ståhl's model) with rules inferred from sample sketches drawn by the artists on our team (Dan and Peter). These rules were modified to allow interpolation within Russell's circumplex model of emotions and to incorporate some variance when planning background and foreground colors, as well as the styles, positions, and angles of "mood lines".

Based on the computed composition, commands were sent to the robot's internal computer to direct Rob Boss to paint using a paintbrush on its left arm and a sponge on its right arm. Each arm featured seven degrees of freedom (DOF) and comprised various sensors and spring-based actuators for safety. The humanoid embodiment (approx. 100 cm long x 80 cm wide x 180 cm high) allowed a working range similar to that of an adult human and, we feel, provided a sense of human-like presence.
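For readers curious how the recognition steps described above might fit together, here is a minimal Python sketch. It is not the code used for Rob Boss. The function names, the FFT-based band power estimate, the averaging of the k nearest labels, the default `k=3`, the host and port, and the two-float message layout are all assumptions made for illustration; only the three bands, the 4 x 3 feature layout, kNN, UDP, and the -1.0 to 1.0 range come from the description.

```python
import socket
import struct

import numpy as np

# Frequency bands named in the description (Hz).
BANDS = {"theta": (4.0, 8.0), "alpha": (8.0, 12.0), "beta": (12.0, 30.0)}


def band_features(window, fs):
    """Return mean log band power per channel and band.

    window: array of shape (n_channels, n_samples); fs: sampling rate in Hz.
    Output is a flat vector ordered channel by channel (theta, alpha, beta).
    Assumption: power is estimated from a plain FFT of the window.
    """
    window = np.asarray(window, dtype=float)
    freqs = np.fft.rfftfreq(window.shape[1], d=1.0 / fs)
    power = np.abs(np.fft.rfft(window, axis=1)) ** 2
    feats = []
    for lo, hi in BANDS.values():
        mask = (freqs >= lo) & (freqs < hi)
        # Small constant avoids log(0) for empty frequency bins.
        feats.append(np.mean(np.log(power[:, mask] + 1e-12), axis=1))
    return np.stack(feats, axis=1).ravel()


def knn_estimate(train_x, train_y, query, k=3):
    """Estimate (arousal, valence) as the mean label of the k nearest samples.

    Assumption: labels are already scaled to [-1, 1] and distance is Euclidean.
    """
    train_x = np.asarray(train_x, dtype=float)
    train_y = np.asarray(train_y, dtype=float)
    dists = np.linalg.norm(train_x - np.asarray(query, dtype=float), axis=1)
    nearest = np.argsort(dists)[:k]
    return np.clip(train_y[nearest].mean(axis=0), -1.0, 1.0)


def pack_levels(arousal, valence):
    """Encode both levels as two big-endian 32-bit floats (assumed wire format)."""
    return struct.pack("!2f", arousal, valence)


def send_levels(arousal, valence, host="127.0.0.1", port=9000):
    """Send one UDP datagram; host and port are placeholders."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(pack_levels(arousal, valence), (host, port))
```

With four frontal channels, `band_features` yields the twelve features mentioned above, and the receiving program could decode a datagram with `struct.unpack("!2f", data)`.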

External links

Newspaper report (Swedish): A description of the robot art demonstration on April 12, when Rob Boss painted "Miserable"
