Robotart

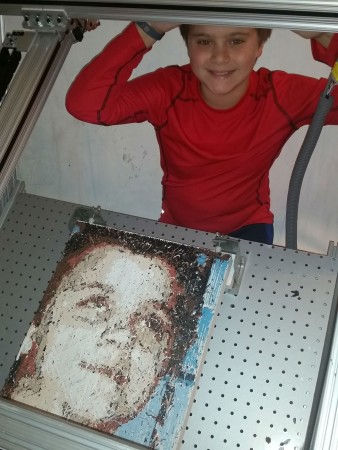

Hunter (Automated Robot Portrait)

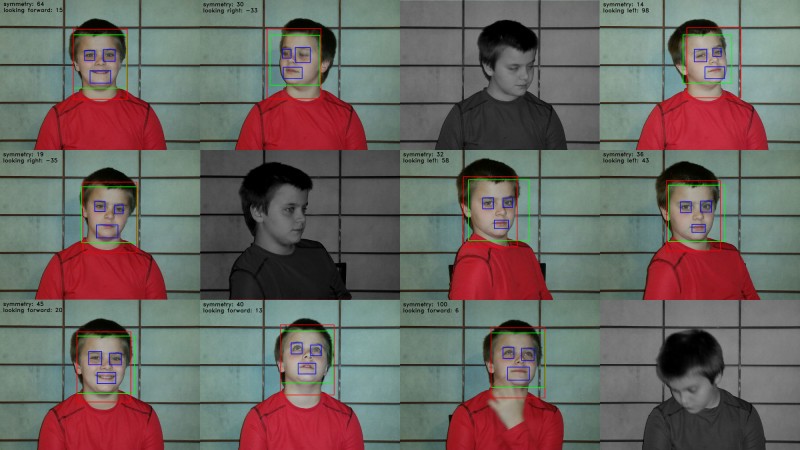

(The video presents the following text with imagery; I suggest you watch it instead of reading this.) Though I typically work with my robots to create art, they can paint completely on their own. Here is a portrait I made with my son. Well, I am not sure we can claim that we made it, because while many aesthetic decisions went into its creation, few if any came from us. The only decision we made was to sit down for some photos.

From there, the AI picked its favorite photo and calculated an original composition. It did this by using OpenCV and the Viola-Jones algorithm to find a face in each photo. If it couldn't find a face, it disregarded the image. It then attempted to find two eyes and a mouth using Haar cascades. Once it located those, it scored the face's symmetry by calculating things such as whether the eyes were the same size. The robot then picked from among the top-scoring photos and cropped the chosen one according to the direction it thought the face was looking.

With an original composition in memory, the robot used k-means clustering to calculate an underpainting and a color palette, and it began to paint. But not blindly. It decided what to paint by comparing a photo of the canvas to the image it was painting and concentrating on the most different area. It did this over and over again, with each stroke, in a feedback loop: first for the underlayer, then for the full color palette.

It also used feedback loops to make one of the most important decisions in any piece of art: the decision to stop. Once it realized that additional brushstrokes were no longer making the canvas look more like the image it was painting, it decided it had done its best and turned itself off. This whole process is very similar to how I paint many portrait commissions, and in some cases it's exactly the same.
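The photo-selection step can be sketched in Python. `detect_features` uses OpenCV's real `cv2.CascadeClassifier` and `detectMultiScale` calls with the Haar cascade files bundled in the opencv-python package (`cv2.data.haarcascades`). OpenCV does not bundle a dedicated mouth cascade, so the smile cascade stands in for one here; that is an assumption. The scoring and cropping rules (`symmetry_score`, `crop_box`) are hypothetical illustrations of "symmetry" and "crop according to look direction", not the robot's actual code.

```python
from typing import List, Optional, Tuple

Box = Tuple[int, int, int, int]  # (x, y, w, h), as returned by detectMultiScale


def detect_features(path: str) -> Optional[Tuple[Box, List[Box], List[Box]]]:
    """Find the largest face, then eyes and mouths inside it, with Haar cascades.

    Returns None when no face is found. Eye and mouth boxes are relative
    to the face region.
    """
    import cv2  # deferred so the pure-Python helpers below run without OpenCV

    img = cv2.imread(path)
    if img is None:
        return None  # unreadable file
    gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
    root = cv2.data.haarcascades
    face_cc = cv2.CascadeClassifier(root + "haarcascade_frontalface_default.xml")
    eye_cc = cv2.CascadeClassifier(root + "haarcascade_eye.xml")
    # Assumption: the bundled smile cascade stands in for a mouth detector.
    mouth_cc = cv2.CascadeClassifier(root + "haarcascade_smile.xml")

    faces = face_cc.detectMultiScale(gray, scaleFactor=1.1, minNeighbors=5)
    if len(faces) == 0:
        return None  # no face: disregard this photo
    fx, fy, fw, fh = (int(v) for v in max(faces, key=lambda b: b[2] * b[3]))
    roi = gray[fy:fy + fh, fx:fx + fw]
    eyes = [tuple(int(v) for v in b) for b in eye_cc.detectMultiScale(roi, 1.1, 5)]
    mouths = [tuple(int(v) for v in b) for b in mouth_cc.detectMultiScale(roi, 1.5, 15)]
    return (fx, fy, fw, fh), eyes, mouths


def _area(b: Box) -> int:
    return b[2] * b[3]


def _center(b: Box) -> Tuple[float, float]:
    return b[0] + b[2] / 2, b[1] + b[3] / 2


def _two_eyes(eyes: List[Box]) -> Tuple[Box, Box]:
    """The two largest eye detections, ordered left to right."""
    left, right = sorted(sorted(eyes, key=_area, reverse=True)[:2])
    return left, right


def symmetry_score(face: Box, eyes: List[Box], mouths: List[Box]) -> Optional[float]:
    """Hypothetical score in [0, 1]; 1.0 means perfectly symmetric features.

    Returns None unless two eyes and a mouth were found.
    """
    if len(eyes) < 2 or not mouths:
        return None
    fw, fh = face[2], face[3]
    left, right = _two_eyes(eyes)
    (lx, ly), (rx, ry) = _center(left), _center(right)
    mx, _ = _center(max(mouths, key=_area))
    # Equal eye sizes, eyes at the same height, mouth centered between the eyes.
    same_size = min(_area(left), _area(right)) / max(_area(left), _area(right))
    same_height = 1 - min(1.0, abs(ly - ry) / (fh / 4))
    mouth_centered = 1 - min(1.0, abs(mx - (lx + rx) / 2) / (fw / 4))
    return (same_size + same_height + mouth_centered) / 3


def crop_box(img_w: int, img_h: int, face: Box, eyes: List[Box], scale: float = 2.0) -> Box:
    """Square crop around the face, shifted toward the side the face seems to
    look (assumed rule: eyes off-center in the face box hint at a head turn)."""
    fx, fy, fw, fh = face
    offset = 0.0
    if len(eyes) >= 2:
        left, right = _two_eyes(eyes)
        eye_mid = (_center(left)[0] + _center(right)[0]) / 2
        offset = (eye_mid - fw / 2) / fw  # < 0: looking toward image left
    side = int(min(img_w, img_h, scale * max(fw, fh)))
    cx = fx + fw / 2 + offset * side  # leave more room in the look direction
    cy = fy + fh / 2
    x = int(min(max(cx - side / 2, 0), img_w - side))
    y = int(min(max(cy - side / 2, 0), img_h - side))
    return x, y, side, side
```

To choose among several photos, one could call `detect_features` on each file, drop photos where it or `symmetry_score` returns None, and pick at random among the few highest scores, which echoes the "top-scoring photos" step.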
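The palette step can be illustrated with plain Lloyd's k-means over RGB pixels. This is a pure-Python sketch: the text does not say which k-means implementation, color space, or initialization the robot used, so RGB, the iteration cap, and seeding from distinct pixel colors are assumptions. Reading the underpainting as the same photo quantized to a small `k` (a few tones) and the full palette as a larger `k` is also an assumption.

```python
import random
from typing import List, Sequence, Tuple

Color = Tuple[float, float, float]


def _dist2(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two colors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans_palette(pixels: List[Color], k: int, iters: int = 20, seed: int = 0):
    """Lloyd's k-means on RGB pixels. Returns (palette, labels)."""
    distinct = sorted(set(pixels))
    if k > len(distinct):
        raise ValueError("k exceeds the number of distinct colors")
    rng = random.Random(seed)
    centers = rng.sample(distinct, k)  # start from k distinct pixel colors
    labels = [0] * len(pixels)
    for _ in range(iters):
        # Assignment step: each pixel joins its nearest center.
        labels = [min(range(k), key=lambda j: _dist2(p, centers[j])) for p in pixels]
        # Update step: move each center to the mean of its member pixels.
        new_centers = []
        for j in range(k):
            members = [p for p, lab in zip(pixels, labels) if lab == j]
            if members:
                new_centers.append(tuple(sum(ch) / len(members) for ch in zip(*members)))
            else:
                new_centers.append(centers[j])  # leave an empty cluster in place
        if new_centers == centers:
            break  # converged
        centers = new_centers
    return centers, labels


def quantize(labels: List[int], palette: List[Color]) -> List[Color]:
    """Replace every pixel with its palette color (a flat, posterized image)."""
    return [palette[lab] for lab in labels]
```

Calling `kmeans_palette` with, say, `k=3` and quantizing would give a rough tonal underpainting, while a larger `k` would give the full palette; those values are illustrative, not the robot's settings.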
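The feedback loop and the decision to stop can be sketched as follows, under stated assumptions: images are small grayscale grids, the "most different area" is the grid cell with the largest summed absolute difference, `total_error` stands in for re-photographing the canvas, and `simulated_stroke` is a hypothetical stand-in for a real brushstroke. The loop stops after `patience` consecutive strokes fail to reduce the error.

```python
from typing import Callable, List, Tuple

Image = List[List[float]]  # grayscale values, 0..255


def total_error(canvas: Image, target: Image) -> float:
    """Sum of absolute pixel differences between canvas and target."""
    return sum(abs(c - t) for crow, trow in zip(canvas, target) for c, t in zip(crow, trow))


def worst_cell(canvas: Image, target: Image, cell: int) -> Tuple[int, int]:
    """Top-left (row, col) of the cell-by-cell patch that differs most."""
    h, w = len(target), len(target[0])
    best, best_err = (0, 0), -1.0
    for r in range(0, h, cell):
        for c in range(0, w, cell):
            err = sum(abs(canvas[i][j] - target[i][j])
                      for i in range(r, min(r + cell, h))
                      for j in range(c, min(c + cell, w)))
            if err > best_err:
                best, best_err = (r, c), err
    return best


def simulated_stroke(canvas: Image, target: Image, r: int, c: int, cell: int) -> None:
    """Toy brushstroke (hypothetical): fill the patch with the target patch's mean."""
    rows = range(r, min(r + cell, len(target)))
    cols = range(c, min(c + cell, len(target[0])))
    vals = [target[i][j] for i in rows for j in cols]
    mean = sum(vals) / len(vals)
    for i in rows:
        for j in cols:
            canvas[i][j] = mean


def paint_until_done(canvas: Image, target: Image,
                     stroke: Callable[[Image, Image, int, int, int], None],
                     cell: int = 4, patience: int = 3, max_strokes: int = 10_000) -> int:
    """Look, paint the most different area, look again; stop once strokes
    no longer help. Returns the number of strokes applied."""
    best = total_error(canvas, target)
    misses = strokes = 0
    while misses < patience and strokes < max_strokes:
        r, c = worst_cell(canvas, target, cell)
        stroke(canvas, target, r, c, cell)  # mutates canvas in place
        strokes += 1
        err = total_error(canvas, target)  # stand-in for photographing the canvas
        if err < best:
            best, misses = err, 0
        else:
            misses += 1  # this stroke did not make the canvas look more like the target
    return strokes
```

Running the loop once against an underpainting target and again against the full-color target would mirror the two passes described above. With a physical brush the error rarely reaches zero, which is why the stopping rule watches for stalled improvement rather than a perfect match.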
